Since Mattermost released a new version with a lot of bug fixes, features and security enhancements, I decided to release a second version of Mattermost with ADFS integration. This is a modified version of the May 17, 2016 stable Mattermost release, v3.0.2.

The advantages of using ADFS over other methods:

- True SSO

- More secure than LDAP or GitLab with LDAP, since users give their AD credentials only to ADFS, never to Mattermost or GitLab

- Proven in enterprise environments

We have also made sure that the following features are available:

- Users from other domains and forests can also use Mattermost if they are invited and a trust exists

- Authentication is based on the AD SID (security identifier), so if a user is deleted or leaves the company, a new user with the same domain username will get a new account with a different Mattermost username. This is very important because it ensures that accounts are unique: even if two users in different domains have the same username, each gets their own unique Mattermost username and they do not affect one another.

- Please note that email addresses must be unique. If a user tries to register with an email address that is already in the system, they will get an error informing them that the user already exists.

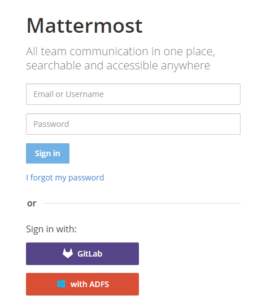

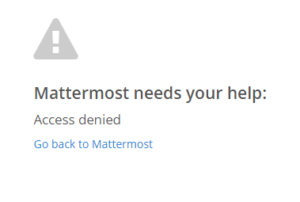

- A visual error message if a user is denied access by ADFS (added on 21/06/2016)
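The SID-based uniqueness described above can be illustrated with a small in-memory model. This is a hypothetical sketch, not the fork's code: the `AccountRegistry` class, the numeric suffix used to disambiguate usernames, and the case-insensitive email comparison are all assumptions made for illustration. What it shows is the rule itself: the SID, not the login name, identifies an account, and email addresses must be unique.

```python
from dataclasses import dataclass, field


@dataclass
class AccountRegistry:
    """Hypothetical in-memory model of SID-keyed accounts (not the fork's code)."""

    by_sid: dict = field(default_factory=dict)   # AD SID -> Mattermost username
    usernames: set = field(default_factory=set)  # usernames already taken
    emails: set = field(default_factory=set)     # lower-cased emails already taken

    def sign_in(self, sid: str, login: str, email: str) -> str:
        """Return the Mattermost username for this SID, creating an account if needed."""
        # Returning user: the SID alone identifies the account.
        if sid in self.by_sid:
            return self.by_sid[sid]

        # New SID: email addresses must be unique (case-insensitive here, an assumption).
        key = email.lower()
        if key in self.emails:
            raise ValueError("a user with this email address already exists")

        # Pick a username no other SID holds (hypothetical numeric-suffix scheme).
        username, n = login, 1
        while username in self.usernames:
            n += 1
            username = f"{login}{n}"

        self.by_sid[sid] = username
        self.usernames.add(username)
        self.emails.add(key)
        return username
```

For example, a second `jsmith` arriving from another domain (different SID) would get `jsmith2`, while the original `jsmith` keeps signing in to the same account.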

Here is the guide on where to get it and how to configure it:

You will first need to download (or compile) and install the new version, which is available below:

You can download the compiled version from https://github.com/lubenk/platform/releases or here:

Linux: https://gi-architects.co.uk/wp-content/uploads/2016/05/mattermost-team-linux-amd64.tar.gz

OSX: https://gi-architects.co.uk/wp-content/uploads/2016/05/mattermost-team-osx-amd64.tar.gz

Windows: https://gi-architects.co.uk/wp-content/uploads/2016/05/mattermost-team-windows-amd64.tar.gz

You can get the code from: https://github.com/lubenk/platform/tree/ADFS-3.0.2

Now that you have a working copy, it’s time to configure ADFS 3.0 for OAuth 2.0. Please follow the instructions at https://gi-architects.co.uk/2016/04/setup-oauth2-on-adfs-3-0/

with the following additional notes:

ClientID: just generate a GUID, for example at https://www.guidgenerator.com/online-guid-generator.aspx (please make sure the GUID is not exactly 26 characters long).
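If you would rather not use a website, Python's standard `uuid` module generates GUIDs locally. A standard GUID string is 36 characters with hyphens (32 without), so either form already satisfies the 26-character rule; the length check below simply encodes that rule. The helper names are illustrative.

```python
import uuid


def new_client_id() -> str:
    """Generate a random GUID to use as the ADFS OAuth client ID."""
    # str(uuid4()) gives the familiar 8-4-4-4-12 form: 36 characters.
    return str(uuid.uuid4())


def client_id_length_ok(client_id: str) -> bool:
    """Apply the guide's rule: the client ID must not be exactly 26 characters."""
    return len(client_id) != 26


if __name__ == "__main__":
    print(new_client_id())
```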

Redirect URI: https://mattermost.local/signup/adfs/complete (where mattermost.local is the DNS name of your Mattermost app)

Relying party identifier: you can simply use the DNS name of your Mattermost app
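The client ID, redirect URI and relying party identifier come together in the authorization request that sends users to ADFS. ADFS 3.0's `/adfs/oauth2/authorize` endpoint takes the relying party identifier as a `resource` parameter alongside the standard OAuth 2.0 parameters. The sketch below builds that URL so you can paste it into a browser and check whether ADFS redirects back with a code or an error, before involving Mattermost. It is not taken from the fork (which presumably builds an equivalent request itself); `adfs.example.com` and the function name are placeholders.

```python
from urllib.parse import urlencode


def adfs_authorize_url(adfs_host: str, client_id: str, redirect_uri: str,
                       resource: str, state: str = "") -> str:
    """Build an ADFS 3.0 OAuth 2.0 authorization-code request URL."""
    params = {
        "response_type": "code",       # authorization code grant
        "client_id": client_id,        # the GUID registered for Mattermost
        "redirect_uri": redirect_uri,  # must match the registered redirect URI exactly
        "resource": resource,          # the relying party identifier
    }
    if state:
        params["state"] = state        # opaque value echoed back to the redirect URI
    return f"https://{adfs_host}/adfs/oauth2/authorize?{urlencode(params)}"


if __name__ == "__main__":
    print(adfs_authorize_url(
        "adfs.example.com",                               # hypothetical ADFS host
        "00000000-0000-0000-0000-000000000000",           # your client ID
        "https://mattermost.local/signup/adfs/complete",
        "https://mattermost.local",
    ))
```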

Set up the following claims. Please make sure the claims are exact; the rule names can be anything:

Once you have set up ADFS, you need to configure Mattermost. You can do this either in config.json or in the admin interface, as shown below:

Please make sure you copy the root CA certificate that issued your ADFS Service Communications certificate, in PEM format (the format that contains -----BEGIN CERTIFICATE-----), into /usr/local/share/ca-certificates with a .crt file extension, then run “sudo update-ca-certificates” so that the server trusts the ADFS TLS endpoint.
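Certificates exported from Windows are often in binary DER form ("DER encoded binary X.509") rather than PEM ("Base-64 encoded X.509"). The sketch below, built on Python's `ssl` helpers, accepts either form and returns PEM text ready to save with a .crt extension. It only performs a light sanity check (an X.509 certificate is a DER SEQUENCE, so its first byte is 0x30); it does not parse the certificate or confirm it is a CA. The function name is illustrative.

```python
import ssl


def to_pem(cert_bytes: bytes) -> str:
    """Return a certificate as PEM text, converting from DER if needed."""
    text = cert_bytes.decode("ascii", errors="ignore").strip()
    if text.startswith("-----BEGIN CERTIFICATE-----"):
        der = ssl.PEM_cert_to_DER_cert(text)  # already PEM (Base-64 export)
    else:
        der = cert_bytes                       # assume a binary DER export
    # Light check only: a DER-encoded certificate starts with a SEQUENCE tag.
    if der[:1] != b"\x30":
        raise ValueError("input does not look like an X.509 certificate")
    # Re-encode so the output always has clean 64-column PEM armour.
    return ssl.DER_cert_to_PEM_cert(der)


if __name__ == "__main__":
    import sys
    with open(sys.argv[1], "rb") as f:
        sys.stdout.write(to_pem(f.read()))
```

Redirect the output to a file such as /usr/local/share/ca-certificates/adfs-root-ca.crt (hypothetical name) and then run update-ca-certificates.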

You also need the ADFS token-signing certificate (public key only) in PEM format somewhere on the server; you will reference its path in the settings.
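With several certificates on an ADFS server, it is easy to export the wrong one. Windows identifies certificates by their SHA-1 thumbprint (the SHA-1 hash of the DER encoding), which the AD FS Management console shows under Service > Certificates. The sketch below computes that thumbprint for a PEM file so you can compare it with the token-signing entry. Function names are illustrative; non-hex characters are dropped from the pasted value because copied thumbprints may contain spaces or invisible characters.

```python
import hashlib
import ssl
import string


def thumbprint(pem: str) -> str:
    """SHA-1 thumbprint of a PEM certificate, in uppercase hex as Windows shows it."""
    der = ssl.PEM_cert_to_DER_cert(pem.strip())
    return hashlib.sha1(der).hexdigest().upper()


def matches_adfs(pem: str, adfs_thumbprint: str) -> bool:
    """Compare against a thumbprint copied from the ADFS console."""
    # Keep only hex digits: pasted values may contain spaces or hidden characters.
    wanted = "".join(c for c in adfs_thumbprint if c in string.hexdigits).upper()
    return thumbprint(pem) == wanted


if __name__ == "__main__":
    import sys
    with open(sys.argv[1]) as f:
        print(thumbprint(f.read()))
```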

And that is it: you should now have a working version of Mattermost with ADFS.

Additional Update (21/06/2016)

I have added error checking for when access is denied on the ADFS side, so Mattermost now displays a clear error message, as shown above.

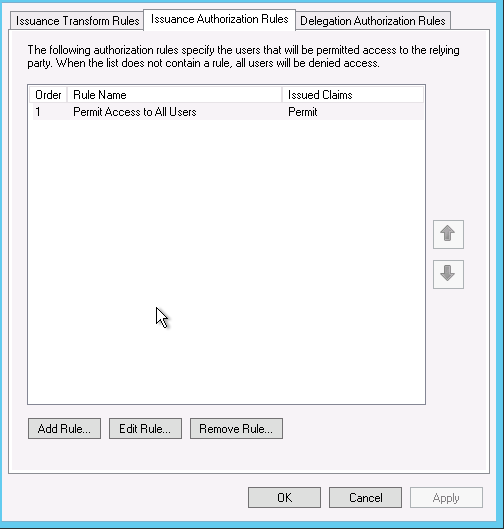

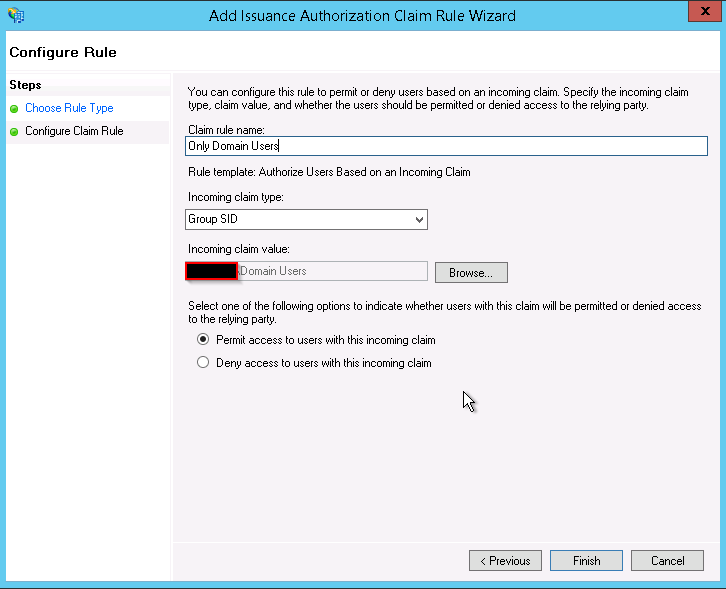
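With this update, the redirect back from ADFS can carry either an authorization code or an error. In standard OAuth 2.0 (RFC 6749, section 4.1.2.1), a refusal is returned as an `error` query parameter such as `access_denied`, with an optional `error_description`. The sketch below shows how a callback handler can tell the two cases apart. It assumes ADFS reports denials this way and is not the code from the fork; `AdfsDenied` and `auth_code_from_callback` are hypothetical names.

```python
from urllib.parse import urlparse, parse_qs


class AdfsDenied(Exception):
    """Raised when ADFS redirects back with an OAuth 2.0 error instead of a code."""


def auth_code_from_callback(callback_url: str) -> str:
    """Return the authorization code, or raise AdfsDenied with the reported reason."""
    query = parse_qs(urlparse(callback_url).query)
    if "error" in query:
        # RFC 6749 4.1.2.1: e.g. error=access_denied plus optional error_description.
        error = query["error"][0]
        detail = query.get("error_description", [""])[0]
        raise AdfsDenied(f"{error}: {detail}" if detail else error)
    if "code" not in query:
        raise ValueError("callback has neither 'code' nor 'error'")
    return query["code"][0]
```

Catching `AdfsDenied` separately lets the application show a friendly "access denied" page instead of a generic failure.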

If you want ADFS to deny access to users based on group membership, email or other attributes, you can easily do so as follows:

Go into your Mattermost relying party trust and edit the claim rules. Once there, go to Issuance Authorization Rules and delete the default rule, which permits access to all users.

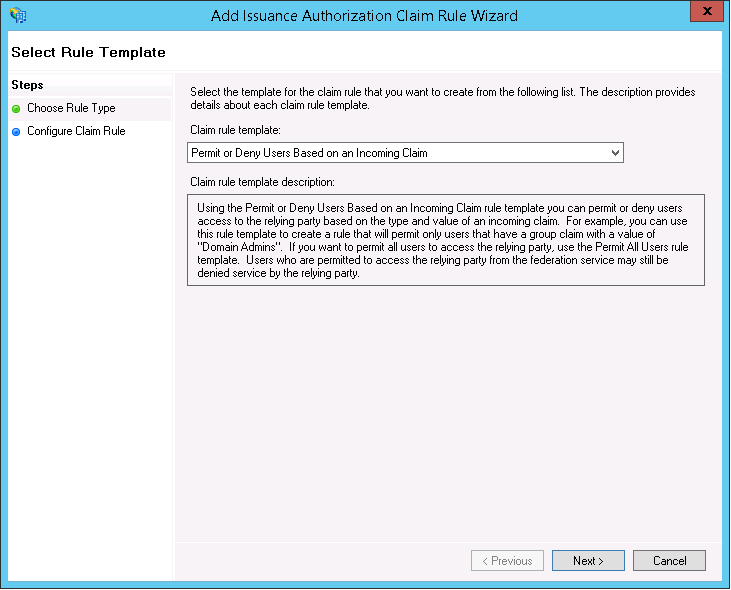

Once it is deleted, add a new rule based on the “Permit or Deny Users Based on an Incoming Claim” template.

Then choose the type of filtering. For example, I filtered on group membership and chose to permit access.

You can create multiple rules, including deny rules; just make sure you order them correctly.